Combining simulation with optimization procedures enables one to investigate complex real-world problems under varying settings and scenarios. Implementation, however, is challenging, as trade-offs between the time spent optimizing and the time spent estimating the quality of a solution have to be considered.

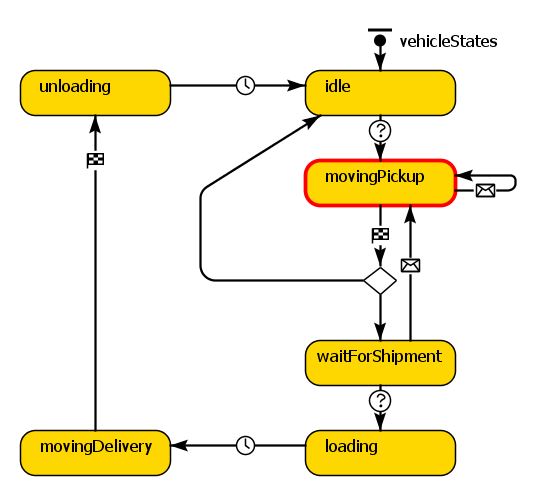

I mostly use agent-based simulations (for an excellent introduction to agent-based simulation, refer to Macal, 2016) and metaheuristic solution procedures to optimize systems in which uncertainty and dynamics are present. Examples include sudden road and rail closures caused by disasters.

In such settings, the simulations require substantial time to estimate the quality of a single solution. The more simulation runs (i.e., replications) are performed, the better the estimate of the objective value. More replications, however, leave less time for optimization iterations. Consider the following setting:

- Given a minimization problem, the estimated quality of the current best solution is 10, calculated from 2,500 simulation runs. A new solution is generated, and after 200 runs its estimated objective value is 15, with the best run returning a value of 9. Should the evaluation be aborted, or should the algorithm continue and spend time on the remaining 2,300 replications?

Based on the expected values, continuing the evaluation is probably not worthwhile. Nevertheless, the answer depends heavily on the uncertainty in the system. Spending the right amount of time in the simulation is therefore crucial.
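One way to make this decision explicit is to evaluate a new solution in batches and stop as soon as it is unlikely to beat the current best. The standard error of the mean shrinks with the square root of the number of replications and grows with the spread of the individual runs, so the same 200 runs can be conclusive in a low-variance system and inconclusive in a high-variance one. The Python sketch below assumes independent replications, a normal approximation for the confidence bound, and a hypothetical `simulate_once` callable that performs one replication. The batch size of 200 and the one-sided 95% bound (`z = 1.645`) are illustrative choices, not values taken from the references.

```python
import math
import random
import statistics
from dataclasses import dataclass
from typing import Callable


@dataclass
class Evaluation:
    mean: float          # estimated objective value of the candidate
    replications: int    # number of simulation runs actually performed
    aborted: bool        # True if the evaluation stopped early


def evaluate_with_early_abort(
    simulate_once: Callable[[], float],  # hypothetical: runs one replication
    incumbent: float,                    # estimated value of the current best
    max_replications: int = 2500,
    batch_size: int = 200,
    z: float = 1.645,                    # one-sided 95% normal quantile
) -> Evaluation:
    """Evaluate a candidate of a minimization problem in batches of runs.

    After each batch, compute a one-sided lower confidence bound on the
    candidate's mean (normal approximation). If even this optimistic bound
    is worse than the incumbent's estimate, skip the remaining runs.
    """
    values: list[float] = []
    while len(values) < max_replications:
        n_batch = min(batch_size, max_replications - len(values))
        values.extend(simulate_once() for _ in range(n_batch))
        n = len(values)
        # stdev needs at least two values; no check after the final batch.
        if 2 <= n < max_replications:
            std_err = statistics.stdev(values) / math.sqrt(n)
            lower_bound = statistics.mean(values) - z * std_err
            if lower_bound > incumbent:
                return Evaluation(statistics.mean(values), n, aborted=True)
    return Evaluation(statistics.mean(values), len(values), aborted=False)


if __name__ == "__main__":
    rng = random.Random(1)
    # Hypothetical candidate whose runs average about 15 (incumbent: 10).
    result = evaluate_with_early_abort(lambda: rng.gauss(15.0, 4.0), incumbent=10.0)
    print(result)
```

With numbers like those in the example, a candidate averaging about 15 is dropped after the first batch of 200 runs, saving 2,300 replications. Two simplifications are worth stating: the rule treats the incumbent's value of 10 as exact, although it is itself an estimate, and checking after every batch is a form of repeated testing, so the actual chance of wrongly discarding a good candidate is higher than the nominal 5% of a single check. Fewer checks (a larger `batch_size`) or a larger `z` make the rule more conservative.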

Multiple ways of combining simulation with optimization are discussed in Juan et al. (2015). Deckert and Klein (2014) introduce various strategies to dynamically control the time spent evaluating the quality of a single solution, i.e., the number of replications run. The simulation software AnyLogic offers an option to run a varying number of replications (see Properties – Replications); however, I prefer to implement a custom version with a Custom Experiment. It provides more flexibility and allows one to collect statistics on the strengths and weaknesses of the employed methods, which helps to generate new findings and to investigate such trade-offs.
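A custom experiment of this kind essentially wraps the optimization loop around the replication logic and records what each evaluation costs. The following Python sketch mimics that idea outside of AnyLogic (it does not use AnyLogic's API) and does not reproduce the strategies of Deckert and Klein (2014). It assumes a hypothetical one-dimensional model, `simulate_once`, whose true cost is minimal at x = 3, a plain random search as a stand-in for a metaheuristic, and a fixed total budget of 50,000 replications. It compares a fixed 2,500 replications per candidate with an early-abort rule and collects simple statistics: candidates evaluated, evaluations aborted, and replications used.

```python
import math
import random
import statistics
from typing import Optional


def simulate_once(x: float, rng: random.Random) -> float:
    """Hypothetical noisy model: true cost (x - 3)^2 + 10 plus Gaussian noise."""
    return (x - 3.0) ** 2 + 10.0 + rng.gauss(0.0, 4.0)


def evaluate(x: float, incumbent: Optional[float], rng: random.Random,
             max_reps: int, batch: int, adaptive: bool,
             z: float = 1.645) -> tuple[float, int]:
    """Return (estimated mean, replications used) for candidate x.

    In adaptive mode, stop early once the one-sided lower confidence bound
    exceeds the incumbent's estimate (minimization).
    """
    values: list[float] = []
    while len(values) < max_reps:
        n_batch = min(batch, max_reps - len(values))
        values.extend(simulate_once(x, rng) for _ in range(n_batch))
        n = len(values)
        if adaptive and incumbent is not None and 2 <= n < max_reps:
            std_err = statistics.stdev(values) / math.sqrt(n)
            if statistics.mean(values) - z * std_err > incumbent:
                break
    return statistics.mean(values), len(values)


def random_search(budget: int, adaptive: bool, max_reps: int = 2500,
                  batch: int = 200, seed: int = 7) -> dict:
    """Random search under a total replication budget; returns statistics."""
    rng = random.Random(seed)
    best_x: Optional[float] = None
    best_value: Optional[float] = None
    stats = {"evaluated": 0, "aborted": 0, "replications": 0}
    # Only start a new candidate if a full evaluation still fits the budget.
    while budget - stats["replications"] >= max_reps:
        x = rng.uniform(-10.0, 10.0)
        mean, used = evaluate(x, best_value, rng, max_reps, batch, adaptive)
        stats["evaluated"] += 1
        stats["replications"] += used
        if used < max_reps:
            stats["aborted"] += 1
        if best_value is None or mean < best_value:
            best_x, best_value = x, mean
    stats["best_x"] = best_x
    stats["best_value"] = best_value
    return stats


if __name__ == "__main__":
    for adaptive in (False, True):
        s = random_search(budget=50_000, adaptive=adaptive)
        label = "adaptive" if adaptive else "fixed"
        print(f"{label:>8}: {s['evaluated']} candidates, "
              f"{s['aborted']} aborted, {s['replications']} replications, "
              f"best x = {s['best_x']:.2f}")
```

With a fixed number of replications, this budget allows exactly 20 candidates; the adaptive variant spends the replications saved on clearly inferior candidates on examining more of them. Whether this pays off depends on the noise level and on how often a good candidate is discarded by mistake, which is the kind of trade-off such collected statistics help to investigate.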

References:

Deckert, A., & Klein, R. (2014). Simulation-based optimization of an agent-based simulation. NETNOMICS: Economic Research and Electronic Networking, 15(1), 33-56.

Juan, A. A., Faulin, J., Grasman, S. E., Rabe, M., & Figueira, G. (2015). A review of simheuristics: Extending metaheuristics to deal with stochastic combinatorial optimization problems. Operations Research Perspectives, 2, 62-72.

Macal, C. M. (2016). Everything you need to know about agent-based modelling and simulation. Journal of Simulation, 10(2), 144-156.
